# Disaster recovery for Embedded Cluster (Alpha)

This topic describes the disaster recovery feature for Replicated Embedded Cluster, including how to enable disaster recovery for your application. It also describes how end users can configure disaster recovery in the Replicated KOTS Admin Console and restore from a backup.

:::important

Embedded Cluster disaster recovery is an Alpha feature. This feature is subject to change, including breaking changes. To get access to this feature, reach out to Alex Parker at [alexp@replicated.com](mailto:alexp@replicated.com).

:::

:::note

Embedded Cluster does not support backup and restore with the KOTS snapshots feature. For more information about using snapshots for existing cluster installations with KOTS, see [About Backup and Restore with Snapshots](/vendor/snapshots-overview).

:::

## Overview

The Embedded Cluster disaster recovery feature allows your customers to take backups from the Admin Console and perform restores from the command line. Disaster recovery for Embedded Cluster is implemented with Velero. For more information about Velero, see the [Velero](https://velero.io/docs/latest/) documentation.

The backups that your customers take from the Admin Console will include both the Embedded Cluster infrastructure and the application resources that you specify.

The Embedded Cluster infrastructure that is backed up includes components such as the KOTS Admin Console and the built-in registry that is deployed for air gap installations. No configuration is required to include Embedded Cluster infrastructure in backups. Vendors specify the application resources to include in backups by configuring a Velero Backup resource in the application release.

## Requirements

Embedded Cluster disaster recovery has the following requirements:

* The disaster recovery feature flag must be enabled for your account. To get access to disaster recovery, reach out to Alex Parker at [alexp@replicated.com](mailto:alexp@replicated.com).

* Embedded Cluster version 1.22.0 or later

* Backups must be stored in S3-compatible storage

## Limitations and known issues

Embedded Cluster disaster recovery has the following limitations and known issues:

* Embedded Cluster supports only AWS S3 as a storage location for backups.

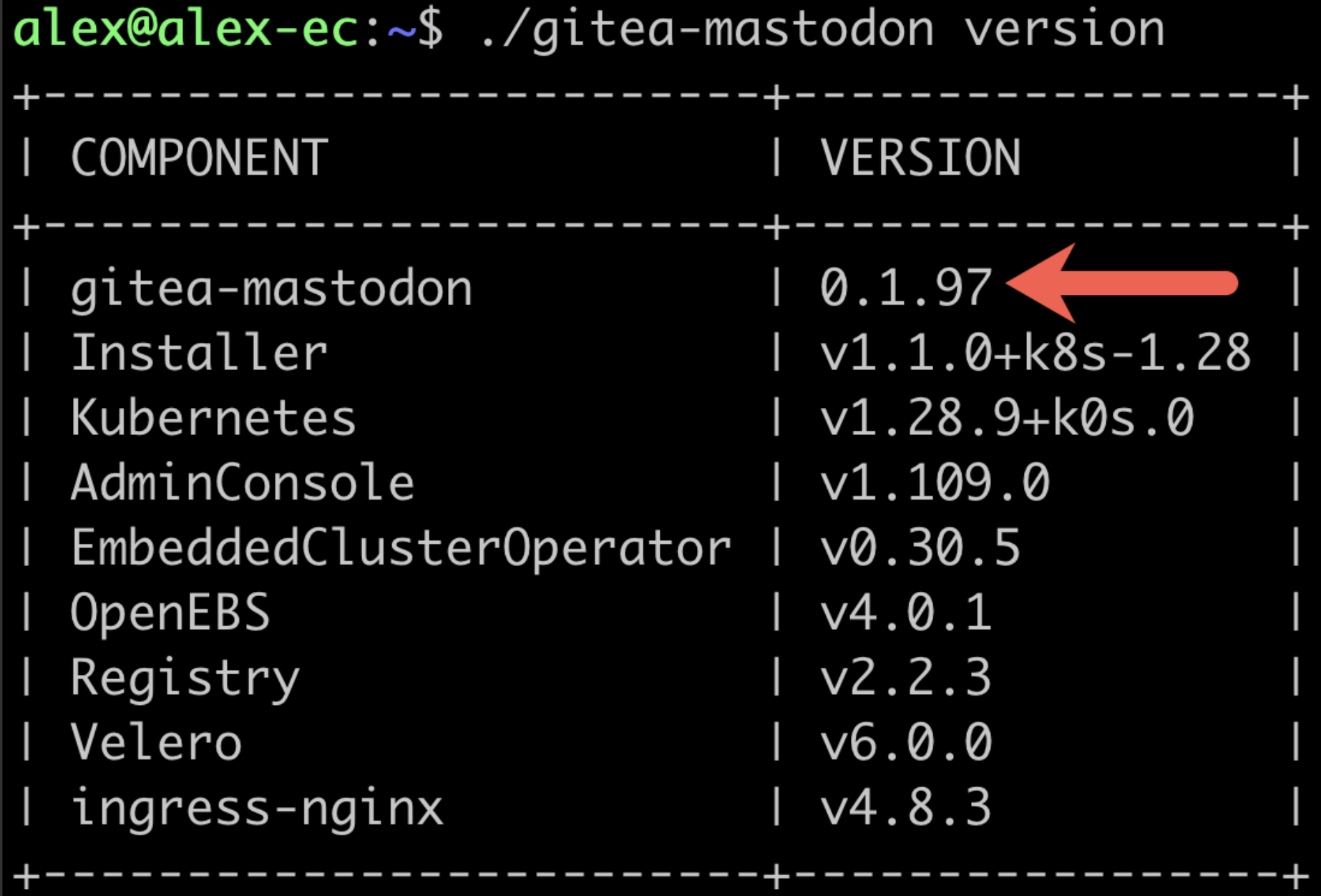

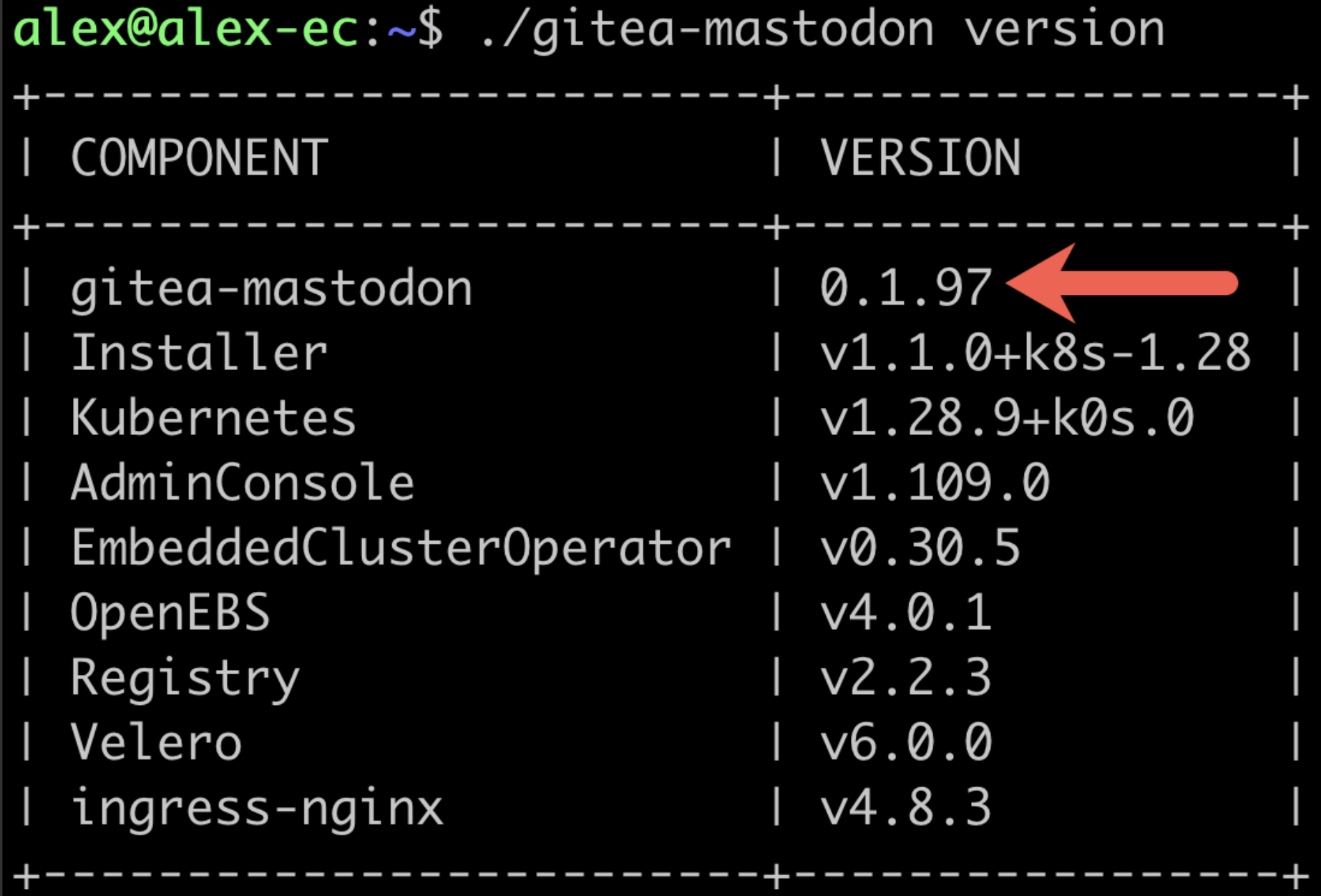

* During a restore, the version of the Embedded Cluster installation assets must match the version of the application in the backup. If version 0.1.97 of your application was backed up, you must use the Embedded Cluster installation assets for version 0.1.97 to perform the restore. To check the version of the installation assets, run `./APP_SLUG version`, where `APP_SLUG` is the unique application slug. For example:

[View a larger version of this image](/images/ec-version-command.png)

* Any Helm extensions included in the `extensions` field of the Embedded Cluster Config are _not_ included in backups. Helm extensions are reinstalled as part of the restore process. To include Helm extensions in backups, configure the Velero Backup resource to include the extensions using namespace-based or label-based selection. For more information, see [Configure the Velero Custom Resources](#config-velero-resources) below.

* Users can only restore from the most recent backup.

* Velero is installed only during the initial installation process. Enabling the disaster recovery license field for a customer after they have already installed has no effect.

* If the `--admin-console-port` flag was used during install to change the port for the Admin Console, the restore uses the Admin Console port from the backup. The port cannot be changed during a restore. For more information, see [install](/reference/embedded-cluster-install).
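
As a sketch of the namespace-based approach for Helm extensions mentioned in the list above, the following Backup resource adds an extension's namespace to `includedNamespaces`. The `ingress-nginx` namespace is a hypothetical placeholder, not something defined by Embedded Cluster. Substitute the namespace where your Helm extension is actually installed.

```yaml
apiVersion: velero.io/v1
kind: Backup
metadata:
  name: backup
spec:
  includedNamespaces:
  # Application resources
  - kotsadm
  # Assumption: a Helm extension is installed in this hypothetical namespace
  - ingress-nginx
  orLabelSelectors:
  - matchExpressions:
    # Exclude Replicated resources from the backup
    - { key: kots.io/kotsadm, operator: NotIn, values: ["true"] }
```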

## Configure disaster recovery

This section describes how to configure disaster recovery for Embedded Cluster installations. It also describes how to enable access to the disaster recovery feature on a per-customer basis.

### Configure the Velero custom resources {#config-velero-resources}

This section describes how to set up Embedded Cluster disaster recovery for your application by configuring Velero [Backup](https://velero.io/docs/latest/api-types/backup/) and [Restore](https://velero.io/docs/latest/api-types/restore/) custom resources in a release.

To configure Velero Backup and Restore custom resources for Embedded Cluster disaster recovery:

1. In a new release containing your application files, add a Velero Backup resource. In the Backup resource, use namespace-based or label-based selection to indicate the application resources that you want to be included in the backup. For more information, see [Backup API Type](https://velero.io/docs/latest/api-types/backup/) in the Velero documentation.

:::important

If you use namespace-based selection to include all of your application resources deployed in the `kotsadm` namespace, ensure that you exclude the Replicated resources that are also deployed in the `kotsadm` namespace. Because the Embedded Cluster infrastructure components are always included in backups automatically, this avoids duplication.

:::

**Example:**

The following Backup resource uses namespace-based selection to include application resources deployed in the `kotsadm` namespace:

```yaml
apiVersion: velero.io/v1
kind: Backup
metadata:
  name: backup
spec:
  # Back up the resources in the kotsadm namespace
  includedNamespaces:
  - kotsadm
  orLabelSelectors:
  - matchExpressions:
    # Exclude Replicated resources from the backup
    - { key: kots.io/kotsadm, operator: NotIn, values: ["true"] }
```

1. In the same release, add a Velero Restore resource. In the `backupName` field of the Restore resource, include the name of the Backup resource that you created. For more information, see [Restore API Type](https://velero.io/docs/latest/api-types/restore/) in the Velero documentation.

**Example:**

```yaml
apiVersion: velero.io/v1
kind: Restore
metadata:
  name: restore
spec:
  # the name of the Backup resource that you created
  backupName: backup
  includedNamespaces:
  - '*'
```

1. For any image names that you include in your Backup and Restore resources, rewrite the image name using the Replicated KOTS [HasLocalRegistry](/reference/template-functions-config-context#haslocalregistry), [LocalRegistryHost](/reference/template-functions-config-context#localregistryhost), and [LocalRegistryNamespace](/reference/template-functions-config-context#localregistrynamespace) template functions. This ensures that the image name is rendered correctly during deployment, allowing the image to be pulled from the user's local image registry (such as in air gap installations) or through the Replicated proxy registry.

**Example:**

```yaml
apiVersion: velero.io/v1
kind: Restore
metadata:
  name: restore
spec:
  hooks:
    resources:
    - name: restore-hook-1
      includedNamespaces:
      - kotsadm
      labelSelector:
        matchLabels:
          app: example
      postHooks:
      - init:
          initContainers:
          - name: restore-hook-init1
            image:
              # Use HasLocalRegistry, LocalRegistryHost, and LocalRegistryNamespace
              # to template the image name
              registry: '{{repl HasLocalRegistry | ternary LocalRegistryHost "proxy.replicated.com" }}'
              repository: '{{repl HasLocalRegistry | ternary LocalRegistryNamespace "proxy/my-app/quay.io/my-org" }}/nginx'
              tag: 1.24-alpine
```

For more information about how to rewrite image names using the KOTS [HasLocalRegistry](/reference/template-functions-config-context#haslocalregistry), [LocalRegistryHost](/reference/template-functions-config-context#localregistryhost), and [LocalRegistryNamespace](/reference/template-functions-config-context#localregistrynamespace) template functions, including additional examples, see [Support Installations with HelmChart v2](/vendor/helm-native-v2-using).

1. If you support air gap installations, add any images that are referenced in your Backup and Restore resources to the `additionalImages` field of the KOTS Application custom resource. This ensures that the images are included in the air gap bundle for the release so they can be used during the backup and restore process in environments with limited or no outbound internet access. For more information, see [additionalImages](/reference/custom-resource-application#additionalimages) in _Application_.

**Example:**

```yaml
apiVersion: kots.io/v1beta1
kind: Application
metadata:
  name: my-app
spec:
  additionalImages:
  - elasticsearch:7.6.0
  - quay.io/orgname/private-image:v1.2.3
```

1. (Optional) Use Velero functionality like [backup](https://velero.io/docs/main/backup-hooks/) and [restore](https://velero.io/docs/main/restore-hooks/) hooks to customize the backup and restore process as needed.

**Example:**

In the following example, a backup hook runs `pg_dump` to write a Postgres database to a file. A restore hook then uses that file to restore the database:

```yaml
podAnnotations:
  backup.velero.io/backup-volumes: backup
  pre.hook.backup.velero.io/command: '["/bin/bash", "-c", "PGPASSWORD=$POSTGRES_PASSWORD pg_dump -U {{repl ConfigOption "postgresql_username" }} -d {{repl ConfigOption "postgresql_database" }} -h 127.0.0.1 > /scratch/backup.sql"]'
  pre.hook.backup.velero.io/timeout: 3m
  post.hook.restore.velero.io/command: '["/bin/bash", "-c", "[ -f \"/scratch/backup.sql\" ] && PGPASSWORD=$POSTGRES_PASSWORD psql -U {{repl ConfigOption "postgresql_username" }} -h 127.0.0.1 -d {{repl ConfigOption "postgresql_database" }} -f /scratch/backup.sql && rm -f /scratch/backup.sql;"]'
  post.hook.restore.velero.io/wait-for-ready: 'true' # waits for the pod to be ready before running the post-restore hook
```

1. Save and promote the release to a development channel for testing.
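
The first step of this procedure mentions label-based selection as an alternative to namespace-based selection. The following is a minimal sketch of that approach, assuming that your application resources carry a label such as `app.kubernetes.io/part-of: my-app`. That label is hypothetical. Use whichever labels your manifests actually apply.

```yaml
apiVersion: velero.io/v1
kind: Backup
metadata:
  name: backup
spec:
  # Back up only the resources that carry the application label
  labelSelector:
    matchLabels:
      # Assumption: hypothetical label applied to all application resources
      app.kubernetes.io/part-of: my-app
```

With label-based selection, any resource that lacks the label is left out of the backup, so it is worth checking that related Secrets, ConfigMaps, and PersistentVolumeClaims are labeled as well. In Velero, `labelSelector` and `orLabelSelectors` are not meant to be set on the same Backup resource.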

### Extend disaster recovery with custom Velero plugins

> Introduced in Embedded Cluster v2.13.0

You can extend Velero's backup and restore capabilities by configuring custom Velero plugins in the Embedded Cluster Config. This is useful if you need to add support for specific backup scenarios, such as backing up databases like PostgreSQL, MySQL, or other stateful applications that require advanced disaster recovery capabilities.

To configure custom Velero plugins, use the `extensions.velero.plugins` key in the Embedded Cluster Config. For more information, see [Configure Velero Plugins](/reference/embedded-config#configure-velero-plugins) in _Embedded Cluster Config_.
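
The following is a rough sketch of what this configuration might look like. Only the `extensions.velero.plugins` key comes from this topic. The `image` field on the plugin entry and the plugin image name are assumptions for illustration, so confirm the exact schema in the Embedded Cluster Config reference before relying on it.

```yaml
apiVersion: embeddedcluster.replicated.com/v1beta1
kind: Config
spec:
  extensions:
    velero:
      plugins:
      # Assumption: each entry references a plugin container image.
      # The image name below is hypothetical.
      - image: registry.example.com/my-org/velero-plugin-example:v1.0.0
```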

### Enable the disaster recovery feature for your customers

After configuring disaster recovery for your application, you can enable it on a per-customer basis with the **Allow Disaster Recovery (Alpha)** license field.

To enable disaster recovery for a customer:

1. In the Vendor Portal, go to the [Customers](https://vendor.replicated.com/customers) page and select the target customer.

1. On the **Manage customer** page, under **License options**, enable the **Allow Disaster Recovery (Alpha)** field.

When your customer installs with Embedded Cluster, Velero will be deployed if the **Allow Disaster Recovery (Alpha)** license field is enabled.

## Take backups and restore

This section describes how your customers can configure backup storage, take backups, and restore from backups.

### Configure backup storage and take backups in the Admin Console

Customers with the **Allow Disaster Recovery (Alpha)** license field can configure their backup storage location and take backups from the Admin Console.

To configure backup storage and take backups:

1. After installing the application and logging in to the Admin Console, click the **Disaster Recovery** tab at the top of the Admin Console.

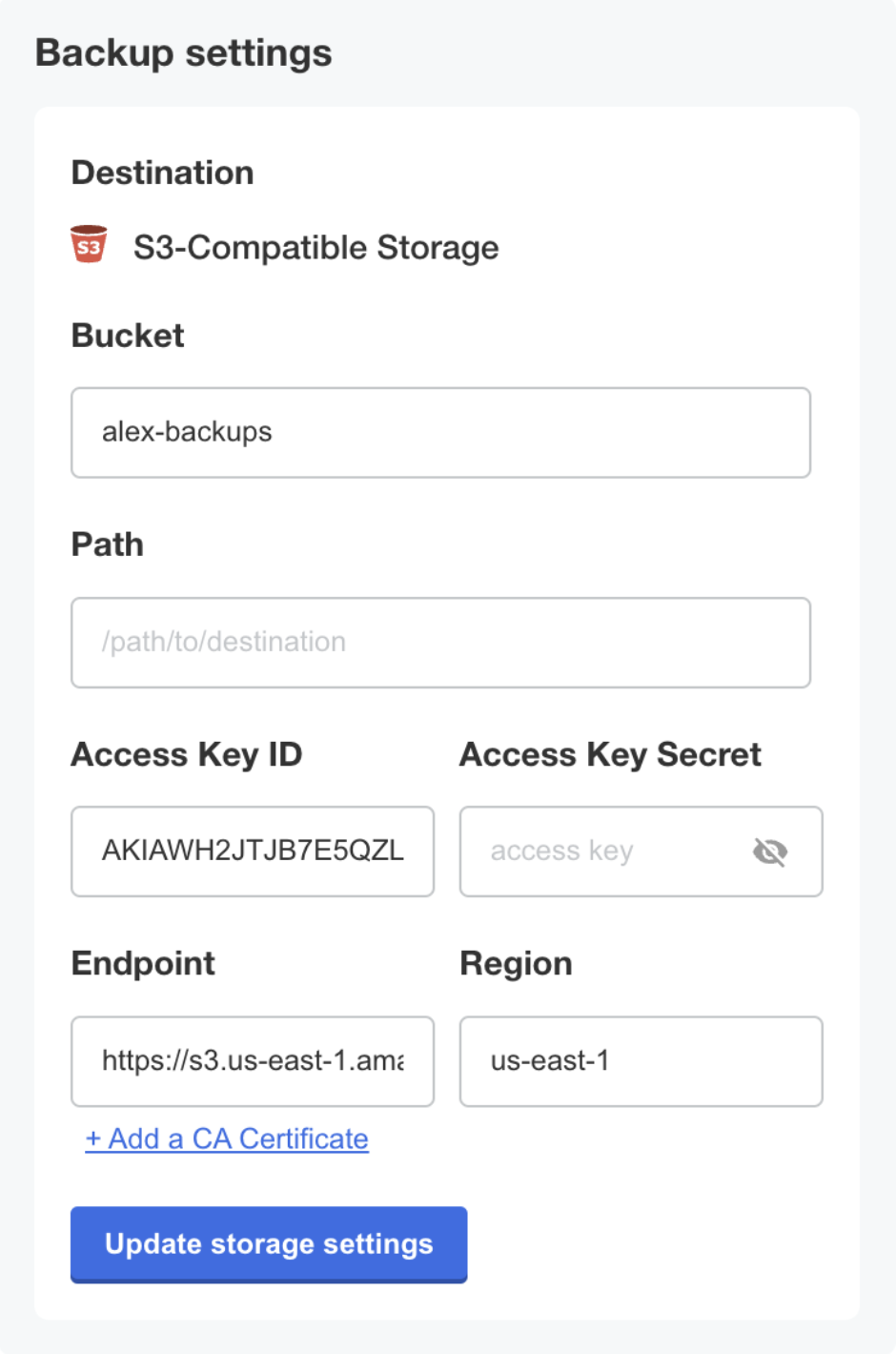

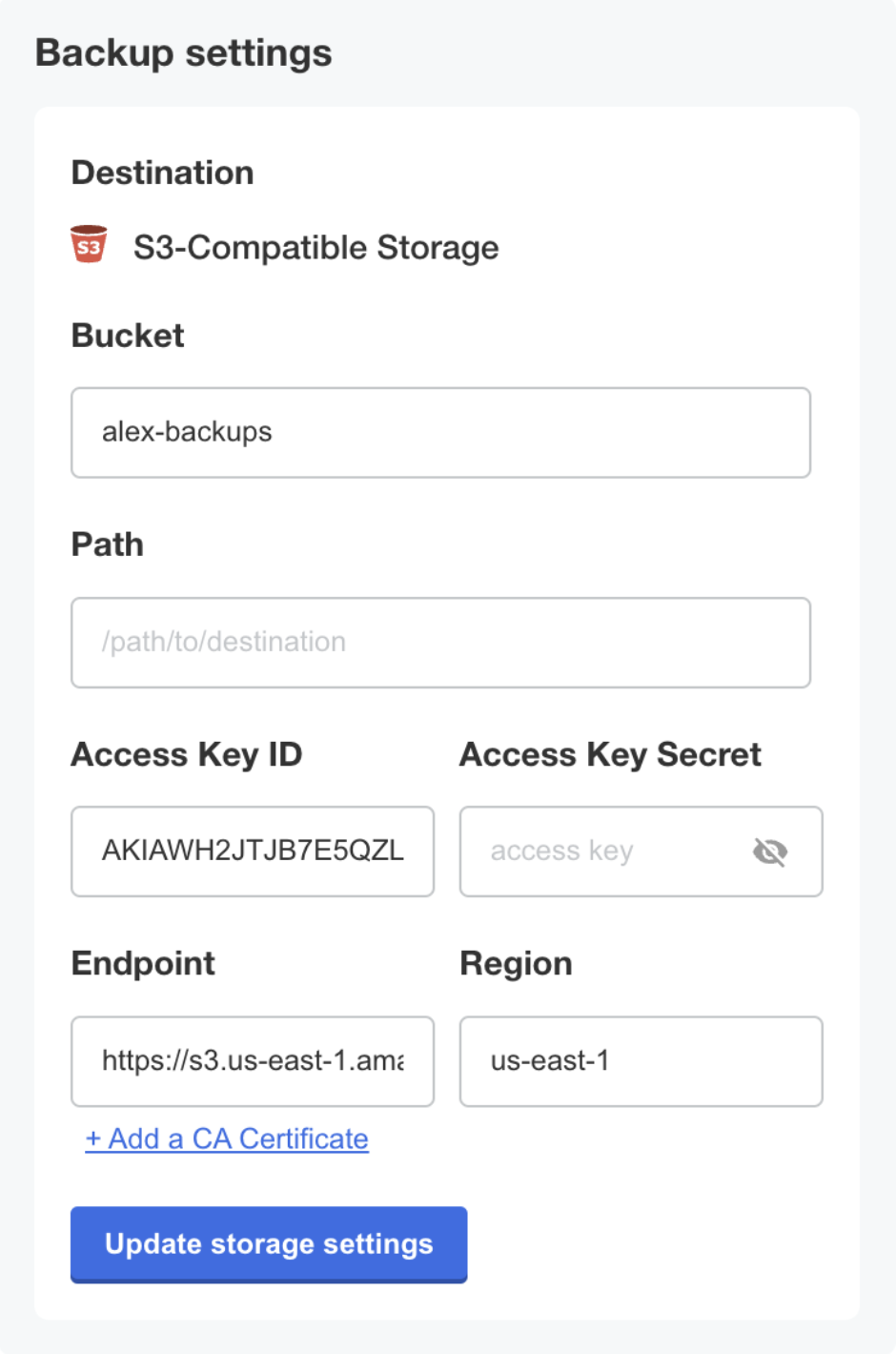

1. For the desired S3-compatible backup storage location, enter the bucket, prefix (optional), access key ID, access key secret, endpoint, and region. Click **Update storage settings**.

[View a larger version of this image](/images/dr-backup-storage-settings.png)

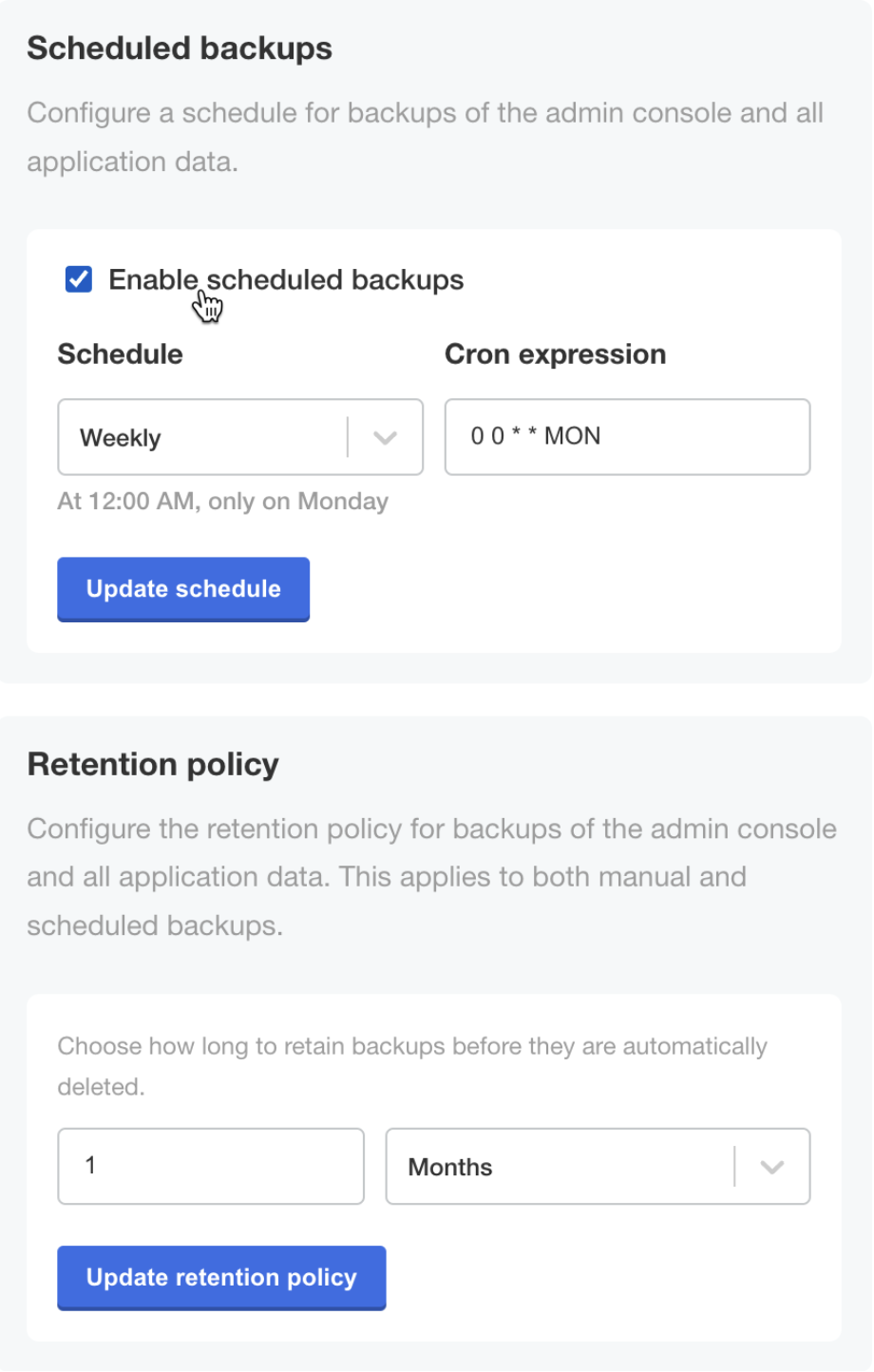

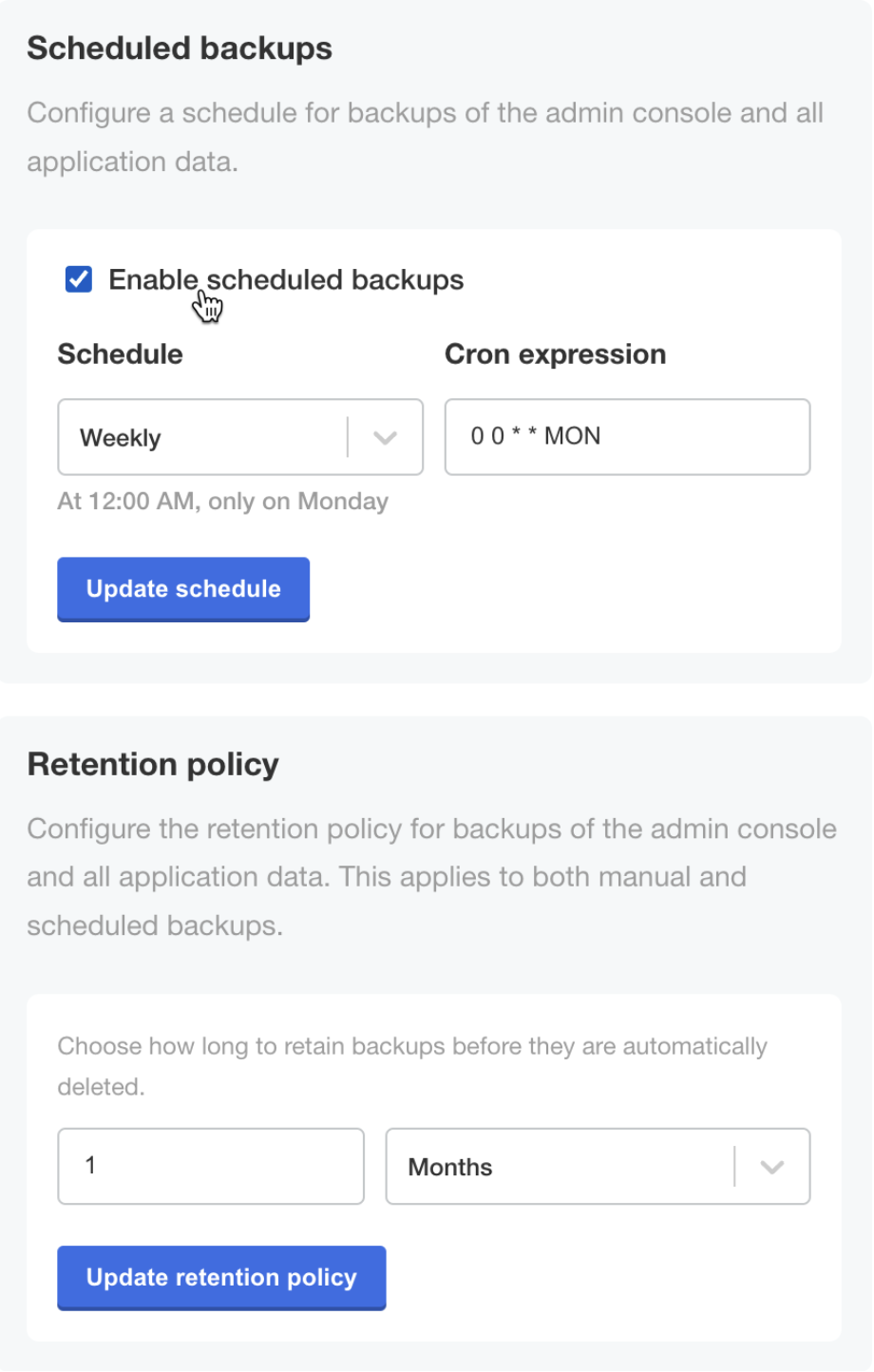

1. (Optional) From this same page, configure scheduled backups and a retention policy for backups.

[View a larger version of this image](/images/dr-scheduled-backups.png)

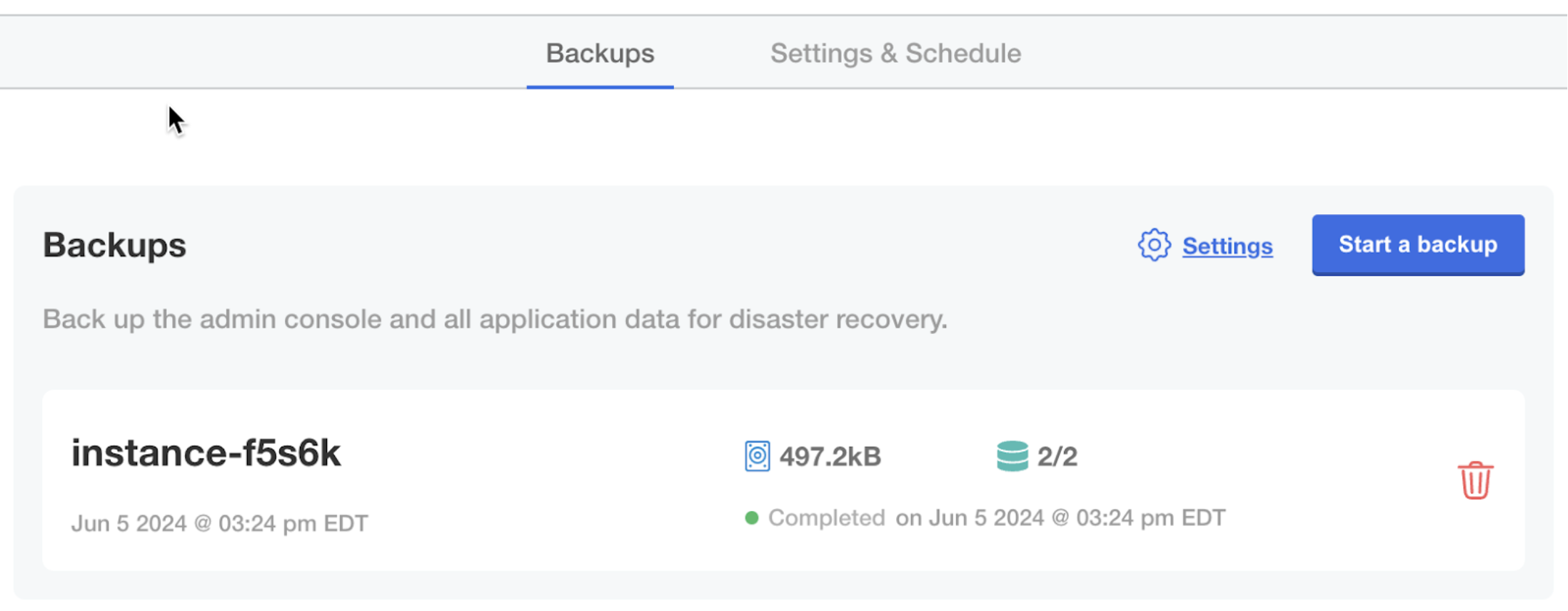

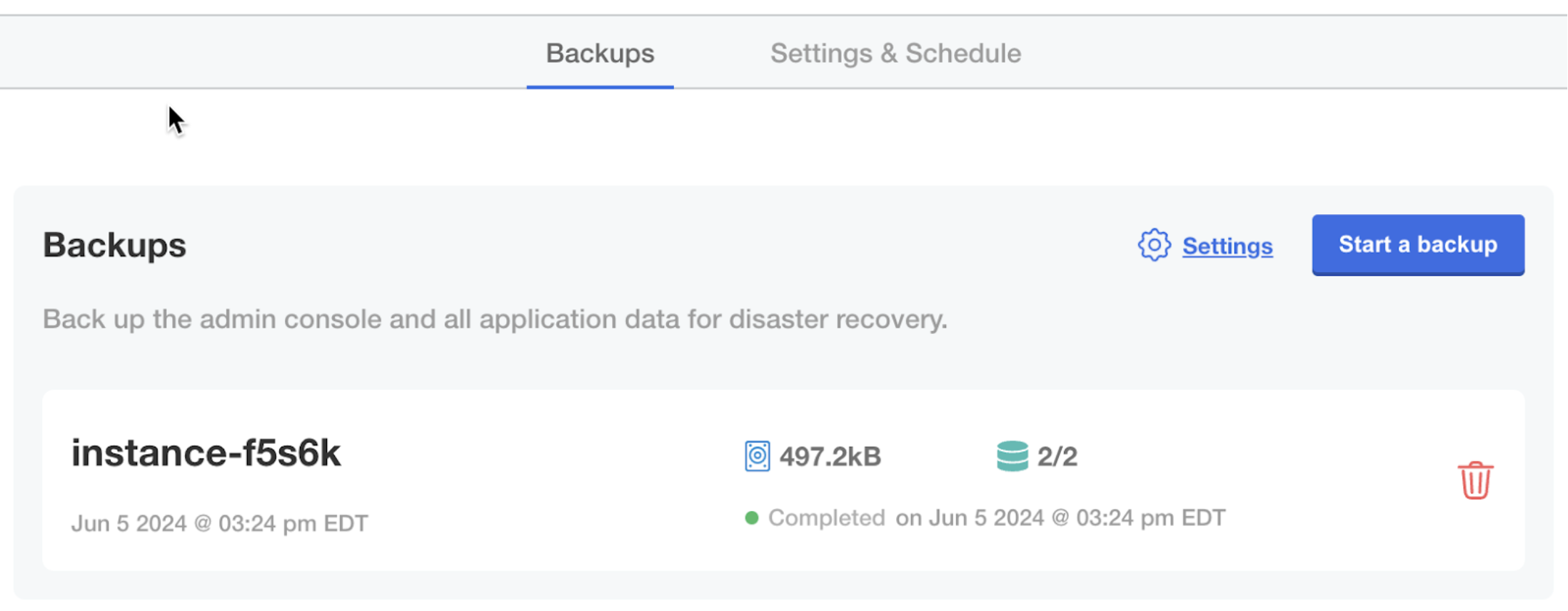

1. In the **Disaster Recovery** submenu, click **Backups**. Backups can be taken from this screen.

[View a larger version of this image](/images/dr-backups.png)

### Restore from a backup

To restore from a backup:

1. SSH onto a new machine where you want to restore from a backup.

1. Download the Embedded Cluster installation assets for the version of the application that was included in the backup. You can find the command for downloading Embedded Cluster installation assets in the **Embedded Cluster install instructions dialog** for the customer. For more information, see [Online Installation with Embedded Cluster](/enterprise/installing-embedded).

:::note

The version of the Embedded Cluster installation assets must match the version that is in the backup. For more information, see [Limitations and Known Issues](#limitations-and-known-issues).

:::

1. Run the restore command:

```bash

sudo ./APP_SLUG restore

```

Where `APP_SLUG` is the unique application slug.

Note the following requirements and guidance for the `restore` command:

* If the installation is behind a proxy, the same proxy settings provided during install must be provided to the restore command using `--http-proxy`, `--https-proxy`, and `--no-proxy`. For more information, see [install](/reference/embedded-cluster-install).

* If the `--cidr` flag was used during install to set the IP address ranges for Pods and Services, this flag must be provided with the same CIDR during the restore. If this flag is not provided or is provided with a different CIDR, the restore fails with an error message telling you to rerun with the appropriate value. However, it takes some time before that error occurs. For more information, see [install](/reference/embedded-cluster-install).

* If the `--local-artifact-mirror-port` flag was used during install to change the port for the Local Artifact Mirror (LAM), you can optionally use the `--local-artifact-mirror-port` flag to choose a different LAM port during restore. For example, `restore --local-artifact-mirror-port=50000`. If no LAM port is provided during restore, the LAM port that was supplied during installation will be used. For more information, see [install](/reference/embedded-cluster-install).

You will be guided through the process of restoring from a backup.

1. When prompted, enter the information for the backup storage location.

[View a larger version of this image](/images/dr-restore.png)

1. When prompted, confirm that you want to restore from the detected backup.

[View a larger version of this image](/images/dr-restore-from-backup-confirmation.png)

After some time, the Admin Console URL is displayed:

[View a larger version of this image](/images/dr-restore-admin-console-url.png)

1. (Optional) If the cluster should have multiple nodes, go to the Admin Console to get a join command and join additional nodes to the cluster. For more information, see [Manage Multi-Node Clusters with Embedded Cluster](/enterprise/embedded-manage-nodes).

1. Type `continue` when you are ready to proceed with the restore process.

[View a larger version of this image](/images/dr-restore-continue.png)

After some time, the restore process completes.

If the `restore` command is interrupted during the restore process, you can resume by rerunning the `restore` command and selecting to resume the previous restore. This is useful if your SSH session is interrupted during the restore.

[View a larger version of this image](/images/dr-backups.png)

### Restore from a backup

To restore from a backup:

1. SSH onto a new machine where you want to restore from a backup.

1. Download the Embedded Cluster installation assets for the version of the application that was included in the backup. You can find the command for downloading Embedded Cluster installation assets in the **Embedded Cluster install instructions dialog** for the customer. For more information, [Online Installation with Embedded Cluster](/enterprise/installing-embedded).

:::note

The version of the Embedded Cluster installation assets must match the version that is in the backup. For more information, see [Limitations and Known Issues](#limitations-and-known-issues).

:::

1. Run the restore command:

```bash

sudo ./APP_SLUG restore

```

Where `APP_SLUG` is the unique application slug.

Note the following requirements and guidance for the `restore` command:

* If the installation is behind a proxy, the same proxy settings provided during install must be provided to the restore command using `--http-proxy`, `--https-proxy`, and `--no-proxy`. For more information, see [install](/reference/embedded-cluster-install).

* If the `--cidr` flag was used during install to the set IP address ranges for Pods and Services, this flag must be provided with the same CIDR during the restore. If this flag is not provided or is provided with a different CIDR, the restore will fail with an error message telling you to rerun with the appropriate value. However, it will take some time before that error occurs. For more information, see [install](/reference/embedded-cluster-install).

* If the `--local-artifact-mirror-port` flag was used during install to change the port for the Local Artifact Mirror (LAM), you can optionally use the `--local-artifact-mirror-port` flag to choose a different LAM port during restore. For example, `restore --local-artifact-mirror-port=50000`. If no LAM port is provided during restore, the LAM port that was supplied during installation will be used. For more information, see [install](/reference/embedded-cluster-install).

You will be guided through the process of restoring from a backup.

1. When prompted, enter the information for the backup storage location.

[View a larger version of this image](/images/dr-restore.png)

1. When prompted, confirm that you want to restore from the detected backup.

[View a larger version of this image](/images/dr-restore-from-backup-confirmation.png)

After some time, the Admin console URL is displayed:

[View a larger version of this image](/images/dr-restore-admin-console-url.png)

1. (Optional) If the cluster should have multiple nodes, go to the Admin Console to get a join command and join additional nodes to the cluster. For more information, see [Manage Multi-Node Clusters with Embedded Cluster](/enterprise/embedded-manage-nodes).

1. Type `continue` when you are ready to proceed with the restore process.

[View a larger version of this image](/images/dr-restore-continue.png)

After some time, the restore process completes.

If the `restore` command is interrupted during the restore process, you can resume by rerunning the `restore` command and selecting to resume the previous restore. This is useful if your SSH session is interrupted during the restore. [View a larger version of this image](/images/ec-version-command.png)

* Any Helm extensions included in the `extensions` field of the Embedded Cluster Config are _not_ included in backups. Helm extensions are reinstalled as part of the restore process. To include Helm extensions in backups, configure the Velero Backup resource to include the extensions using namespace-based or label-based selection. For more information, see [Configure the Velero Custom Resources](#config-velero-resources) below.

* Users can only restore from the most recent backup.

* Velero is installed only during the initial installation process. Enabling the disaster recovery license field for customers after they have already installed will not do anything.

* If the `--admin-console-port` flag was used during install to change the port for the Admin Console, note that during a restore the Admin Console port will be used from the backup and cannot be changed. For more information, see [install](/reference/embedded-cluster-install).

## Configure disaster recovery

This section describes how to configure disaster recovery for Embedded Cluster installations. It also describes how to enable access to the disaster recovery feature on a per-customer basis.

### Configure the Velero custom resources {#config-velero-resources}

This section describes how to set up Embedded Cluster disaster recovery for your application by configuring Velero [Backup](https://velero.io/docs/latest/api-types/backup/) and [Restore](https://velero.io/docs/latest/api-types/restore/) custom resources in a release.

To configure Velero Backup and Restore custom resources for Embedded Cluster disaster recovery:

1. In a new release containing your application files, add a Velero Backup resource. In the Backup resource, use namespace-based or label-based selection to indicate the application resources that you want to be included in the backup. For more information, see [Backup API Type](https://velero.io/docs/latest/api-types/backup/) in the Velero documentation.

:::important

If you use namespace-based selection to include all of your application resources deployed in the `kotsadm` namespace, ensure that you exclude the Replicated resources that are also deployed in the `kotsadm` namespace. Because the Embedded Cluster infrastructure components are always included in backups automatically, this avoids duplication.

:::

**Example:**

The following Backup resource uses namespace-based selection to include application resources deployed in the `kotsadm` namespace:

```yaml

apiVersion: velero.io/v1

kind: Backup

metadata:

name: backup

spec:

# Back up the resources in the kotsadm namespace

includedNamespaces:

- kotsadm

orLabelSelectors:

- matchExpressions:

# Exclude Replicated resources from the backup

- { key: kots.io/kotsadm, operator: NotIn, values: ["true"] }

```

1. In the same release, add a Velero Restore resource. In the `backupName` field of the Restore resource, include the name of the Backup resource that you created. For more information, see [Restore API Type](https://velero.io/docs/latest/api-types/restore/) in the Velero documentation.

**Example**:

```yaml

apiVersion: velero.io/v1

kind: Restore

metadata:

name: restore

spec:

# the name of the Backup resource that you created

backupName: backup

includedNamespaces:

- '*'

```

1. For any image names that you include in your Backup and Restore resources, rewrite the image name using the Replicated KOTS [HasLocalRegistry](/reference/template-functions-config-context#haslocalregistry), [LocalRegistryHost](/reference/template-functions-config-context#localregistryhost), and [LocalRegistryNamespace](/reference/template-functions-config-context#localregistrynamespace) template functions. This ensures that the image name is rendered correctly during deployment, allowing the image to be pulled from the user's local image registry (such as in air gap installations) or through the Replicated proxy registry.

**Example:**

```yaml
apiVersion: velero.io/v1
kind: Restore
metadata:
  name: restore
spec:
  hooks:
    resources:
    - name: restore-hook-1
      includedNamespaces:
      - kotsadm
      labelSelector:
        matchLabels:
          app: example
      postHooks:
      - init:
          initContainers:
          - name: restore-hook-init1
            image:
              # Use HasLocalRegistry, LocalRegistryHost, and LocalRegistryNamespace
              # to template the image name
              registry: '{{repl HasLocalRegistry | ternary LocalRegistryHost "proxy.replicated.com" }}'
              repository: '{{repl HasLocalRegistry | ternary LocalRegistryNamespace "proxy/my-app/quay.io/my-org" }}/nginx'
              tag: 1.24-alpine
```

For more information about how to rewrite image names using the KOTS [HasLocalRegistry](/reference/template-functions-config-context#haslocalregistry), [LocalRegistryHost](/reference/template-functions-config-context#localregistryhost), and [LocalRegistryNamespace](/reference/template-functions-config-context#localregistrynamespace) template functions, including additional examples, see [Support Installations with HelmChart v2](/vendor/helm-native-v2-using).

1. If you support air gap installations, add any images that are referenced in your Backup and Restore resources to the `additionalImages` field of the KOTS Application custom resource. This ensures that the images are included in the air gap bundle for the release so they can be used during the backup and restore process in environments with limited or no outbound internet access. For more information, see [additionalImages](/reference/custom-resource-application#additionalimages) in _Application_.

**Example:**

```yaml
apiVersion: kots.io/v1beta1
kind: Application
metadata:
  name: my-app
spec:
  additionalImages:
    - elasticsearch:7.6.0
    - quay.io/orgname/private-image:v1.2.3
```

1. (Optional) Use Velero functionality like [backup](https://velero.io/docs/main/backup-hooks/) and [restore](https://velero.io/docs/main/restore-hooks/) hooks to customize the backup and restore process as needed.

**Example:**

For example, a Postgres database might be backed up using pg_dump to extract the database into a file as part of a backup hook. It can then be restored using the file in a restore hook:

```yaml
podAnnotations:
  backup.velero.io/backup-volumes: backup
  pre.hook.backup.velero.io/command: '["/bin/bash", "-c", "PGPASSWORD=$POSTGRES_PASSWORD pg_dump -U {{repl ConfigOption "postgresql_username" }} -d {{repl ConfigOption "postgresql_database" }} -h 127.0.0.1 > /scratch/backup.sql"]'
  pre.hook.backup.velero.io/timeout: 3m
  post.hook.restore.velero.io/command: '["/bin/bash", "-c", "[ -f \"/scratch/backup.sql\" ] && PGPASSWORD=$POSTGRES_PASSWORD psql -U {{repl ConfigOption "postgresql_username" }} -h 127.0.0.1 -d {{repl ConfigOption "postgresql_database" }} -f /scratch/backup.sql && rm -f /scratch/backup.sql;"]'
  post.hook.restore.velero.io/wait-for-ready: 'true' # waits for the pod to be ready before running the post-restore hook
```
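
Velero also accepts backup hooks in the Backup resource itself, under `spec.hooks`, instead of in Pod annotations. The following is a rough sketch of the same `pg_dump` step expressed that way. The hook name, the `app: postgresql` label, and the `postgresql` container name are hypothetical and need to match your own workload.

```yaml
apiVersion: velero.io/v1
kind: Backup
metadata:
  name: backup
spec:
  includedNamespaces:
  - kotsadm
  orLabelSelectors:
  - matchExpressions:
    - { key: kots.io/kotsadm, operator: NotIn, values: ["true"] }
  hooks:
    resources:
    - name: postgres-dump          # hypothetical hook name
      includedNamespaces:
      - kotsadm
      labelSelector:
        matchLabels:
          app: postgresql          # hypothetical Pod label
      pre:
      - exec:
          container: postgresql    # hypothetical container name
          command:
          - /bin/bash
          - -c
          - 'PGPASSWORD=$POSTGRES_PASSWORD pg_dump -U {{repl ConfigOption "postgresql_username" }} -d {{repl ConfigOption "postgresql_database" }} -h 127.0.0.1 > /scratch/backup.sql'
          # Fail the backup if the dump cannot be created
          onError: Fail
          timeout: 3m
```

With this approach, the volume that holds `/scratch/backup.sql` still has to be included in the backup, for example with the `backup.velero.io/backup-volumes` annotation shown above.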

1. Save and promote the release to a development channel for testing.

### Extend disaster recovery with custom Velero plugins

> Introduced in Embedded Cluster v2.13.0

You can extend Velero's backup and restore capabilities by configuring custom Velero plugins in the Embedded Cluster Config. This is useful if you need to add support for specific backup scenarios, such as backing up databases like PostgreSQL, MySQL, or other stateful applications that require advanced disaster recovery capabilities.

To configure custom Velero plugins, use the `extensions.velero.plugins` key in the Embedded Cluster Config. For more information, see [Configure Velero Plugins](/reference/embedded-config#configure-velero-plugins) in _Embedded Cluster Config_.
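
As a rough sketch, a plugin entry in the Embedded Cluster Config might look like the following. The plugin name and image are hypothetical, and the per-plugin field names (`name`, `image`, `imagePullPolicy`) are assumptions that should be checked against the Embedded Cluster Config reference.

```yaml
apiVersion: embeddedcluster.replicated.com/v1beta1
kind: Config
spec:
  extensions:
    velero:
      plugins:
        # Hypothetical plugin name and image; field names are assumed
        - name: velero-plugin-postgresql
          image: myvendor/velero-postgresql:v1.0.0
          imagePullPolicy: IfNotPresent
```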

### Enable the disaster recovery feature for your customers

After configuring disaster recovery for your application, you can enable it on a per-customer basis with the **Allow Disaster Recovery (Alpha)** license field.

To enable disaster recovery for a customer:

1. In the Vendor Portal, go to the [Customers](https://vendor.replicated.com/customers) page and select the target customer.

1. On the **Manage customer** page, under **License options**, enable the **Allow Disaster Recovery (Alpha)** field.

When your customer installs with Embedded Cluster, Velero will be deployed if the **Allow Disaster Recovery (Alpha)** license field is enabled.

## Take backups and restore

This section describes how your customers can configure backup storage, take backups, and restore from backups.

### Configure backup storage and take backups in the Admin Console

Customers with the **Allow Disaster Recovery (Alpha)** license field can configure their backup storage location and take backups from the Admin Console.

To configure backup storage and take backups:

1. After installing the application and logging in to the Admin Console, click the **Disaster Recovery** tab at the top of the Admin Console.

1. For the desired S3-compatible backup storage location, enter the bucket, prefix (optional), access key ID, access key secret, endpoint, and region. Click **Update storage settings**.

[View a larger version of this image](/images/dr-backup-storage-settings.png)

1. (Optional) From this same page, configure scheduled backups and a retention policy for backups.

[View a larger version of this image](/images/dr-scheduled-backups.png)

1. In the **Disaster Recovery** submenu, click **Backups**. Backups can be taken from this screen.

[View a larger version of this image](/images/dr-backups.png)

### Restore from a backup

To restore from a backup:

1. SSH onto a new machine where you want to restore from a backup.

1. Download the Embedded Cluster installation assets for the version of the application that was included in the backup. You can find the command for downloading Embedded Cluster installation assets in the **Embedded Cluster install instructions dialog** for the customer. For more information, see [Online Installation with Embedded Cluster](/enterprise/installing-embedded).

:::note

The version of the Embedded Cluster installation assets must match the version that is in the backup. For more information, see [Limitations and Known Issues](#limitations-and-known-issues).

:::

1. Run the restore command:

```bash
sudo ./APP_SLUG restore
```

Where `APP_SLUG` is the unique application slug.

Note the following requirements and guidance for the `restore` command:

* If the installation is behind a proxy, the same proxy settings provided during install must be provided to the restore command using `--http-proxy`, `--https-proxy`, and `--no-proxy`. For more information, see [install](/reference/embedded-cluster-install).

* If the `--cidr` flag was used during install to set the IP address ranges for Pods and Services, this flag must be provided with the same CIDR during the restore. If this flag is not provided or is provided with a different CIDR, the restore will fail with an error message telling you to rerun with the appropriate value. However, it will take some time before that error occurs. For more information, see [install](/reference/embedded-cluster-install).

* If the `--local-artifact-mirror-port` flag was used during install to change the port for the Local Artifact Mirror (LAM), you can optionally use the `--local-artifact-mirror-port` flag to choose a different LAM port during restore. For example, `restore --local-artifact-mirror-port=50000`. If no LAM port is provided during restore, the LAM port that was supplied during installation will be used. For more information, see [install](/reference/embedded-cluster-install).

You will be guided through the process of restoring from a backup.

1. When prompted, enter the information for the backup storage location.

[View a larger version of this image](/images/dr-restore.png)

1. When prompted, confirm that you want to restore from the detected backup.

[View a larger version of this image](/images/dr-restore-from-backup-confirmation.png)

After some time, the Admin Console URL is displayed:

[View a larger version of this image](/images/dr-restore-admin-console-url.png)

1. (Optional) If the cluster should have multiple nodes, go to the Admin Console to get a join command and join additional nodes to the cluster. For more information, see [Manage Multi-Node Clusters with Embedded Cluster](/enterprise/embedded-manage-nodes).

1. Type `continue` when you are ready to proceed with the restore process.

[View a larger version of this image](/images/dr-restore-continue.png)

After some time, the restore process completes.

If the `restore` command is interrupted during the restore process, you can resume by rerunning the `restore` command and selecting to resume the previous restore. This is useful if your SSH session is interrupted during the restore.
